I Pulled a Thread. It Led to A.I.

What a Letter About School Photos Reveals About Power, Ownership, and the Future of Work

Many of you who read my Substack have figured out I’m an AP Research teacher. I’m also a yearbook teacher. A few days ago, I received a letter from Ken Murphy, the CEO of Lifetouch—the company that takes nearly all school photos across the U.S. I’ve not had a problem with Lifetouch. Their photographers have always done right by us.

But most people never see how deep that system runs.

Picture day isn’t just a day. It’s the student ID in your kid’s wallet. It’s the photo in Skyward. It’s the senior portrait on the wall. It’s the package families order without thinking about who made it or how it got there. It’s the yearbook.

That takes equipment, software, staff, and scale most districts can’t build on their own. Lifetouch didn’t just land contracts. They built themselves into the way schools function—as many businesses have.

And for a long time, nobody had a reason to question it.

The rabbit hole didn’t open for me until Mr. Murphy’s email, because what I noticed the most was what he left out.

Here’s part of what he wrote me:

“Dear Carol, As the CEO of Lifetouch – and more importantly, a father of five school-aged children – I wanted to take this opportunity to personally respond to entirely false information about the company that has been circulating online... Lifetouch does not – and has never – provided images to any third party. Lifetouch follows all applicable federal, state, and local data privacy laws, including the Family Educational Rights and Privacy Act, known as FERPA.”

He called the concerns “entirely false information.” He talked about FERPA compliance. He name-dropped the National Center for Missing and Exploited Children—bold move, given the context. What he did not mention was Leon Black. He didn’t mention Apollo Global Management, the private equity firm that owns Shutterfly, which owns Lifetouch. He didn’t mention that Apollo’s co-founder paid Jeffrey Epstein $158 million (CNBC). According to news sources, Black denies everything. He hasn’t been charged. He resigned as Apollo’s CEO in 2021 over his Epstein ties, but he’s still a major shareholder (Yahoo News).

Nobody accused the photographers of a thing. The question was always who profits when Lifetouch gets a contract with a school district. Now, parents nationwide are demanding investigations. School districts are reviewing their Lifetouch contracts (National Today, Huffington Post). I’ve seen local districts around me announce on social media that they have canceled any further dealings with Lifetouch.

And yet, the CEO sent yearbook sponsors an email about FERPA.

I don’t claim to be an expert on the Epstein files. I don’t want to see what’s in them. But I’ve heard enough from members of Congress in both parties to know that what’s in them is very bad.

Right around the time I got that email from Mr. Murphy, a viral post called “Something Big is Happening,” written by an AI startup CEO named Matt Shumer, started blowing up on TikTok, X, everywhere. That post got twenty million views in less than a day (Inc.). Everybody was freaking out about it. And something in my brain connected those two things.

So I did what a researcher does. I went to the source. Last night, I sat down with Claude—Anthropic’s AI—and started asking it hard questions about the Epstein files, about who’s building AI, about who owns the companies reshaping American education, and what happens when you put those three things together. I verified every claim against reporting from NBC News, CBS, CNBC, and other outlets. Here’s what’s documented.

And what I found scared the hell out of me.

What Shumer Gets Right — and What He Leaves Out

Here’s the part of Shumer’s essay that matters. He says the future of AI is being shaped by a few hundred people at a handful of companies. He says he describes what he wants built in plain English, walks away for four hours, and comes back to finished work better than he could’ve done himself. He compares where we are to early 2020, when COVID was already here but nobody wanted to believe it.

His advice? Subscribe to ChatGPT or Claude for twenty bucks a month. Use it at work. Don’t fall behind.

He’s not wrong about the technology. But here’s what Shumer never asks: Who owns this? Who’s building it? What do they think about the rest of us?

What I Found in the Epstein Files

CBS News reported the DOJ has released over three million pages of Epstein documents. At least 20 prominent tech executives, investors, and researchers show up in those files (CBS News). And it isn’t just one handshake at a fundraiser, as some would like you to believe. This is years of correspondence.

Reporting by NPR’s Pierce Gentry documents that Epstein provided funding connected to scientific research involving universities and individuals who later played roles in AI development (NPR).

That matters, because the same research networks, the same donors, the same circles of influence helped shape the technology now moving into every corner of the workforce.

I asked Claude a simpler question: which of the names that show up in those files also show up in American education. The answer came back fast.

Bill Gates—who met with Epstein after his 2008 conviction—has spent more than $6 billion on U.S. education, partnering with thousands of school districts, colleges, and nonprofits to shape curriculum, teacher evaluation, and data systems (Fortune, May 2025). Mark Zuckerberg, whose chief of staff appears in the files, has directed billions through the Chan Zuckerberg Initiative into education technology and personalized learning platforms used in schools across the country. Elon Musk—whose emails with Epstein have been reported—has invested in building his own school in Texas (NBC News, Fortune Magazine).

These aren’t fringe players. They fund the systems schools rely on—curriculum, software, data, infrastructure. Lifetouch isn’t an exception. It’s part of a much larger network.

And that system doesn’t stop at schools. It extends into the jobs those students are being prepared for.

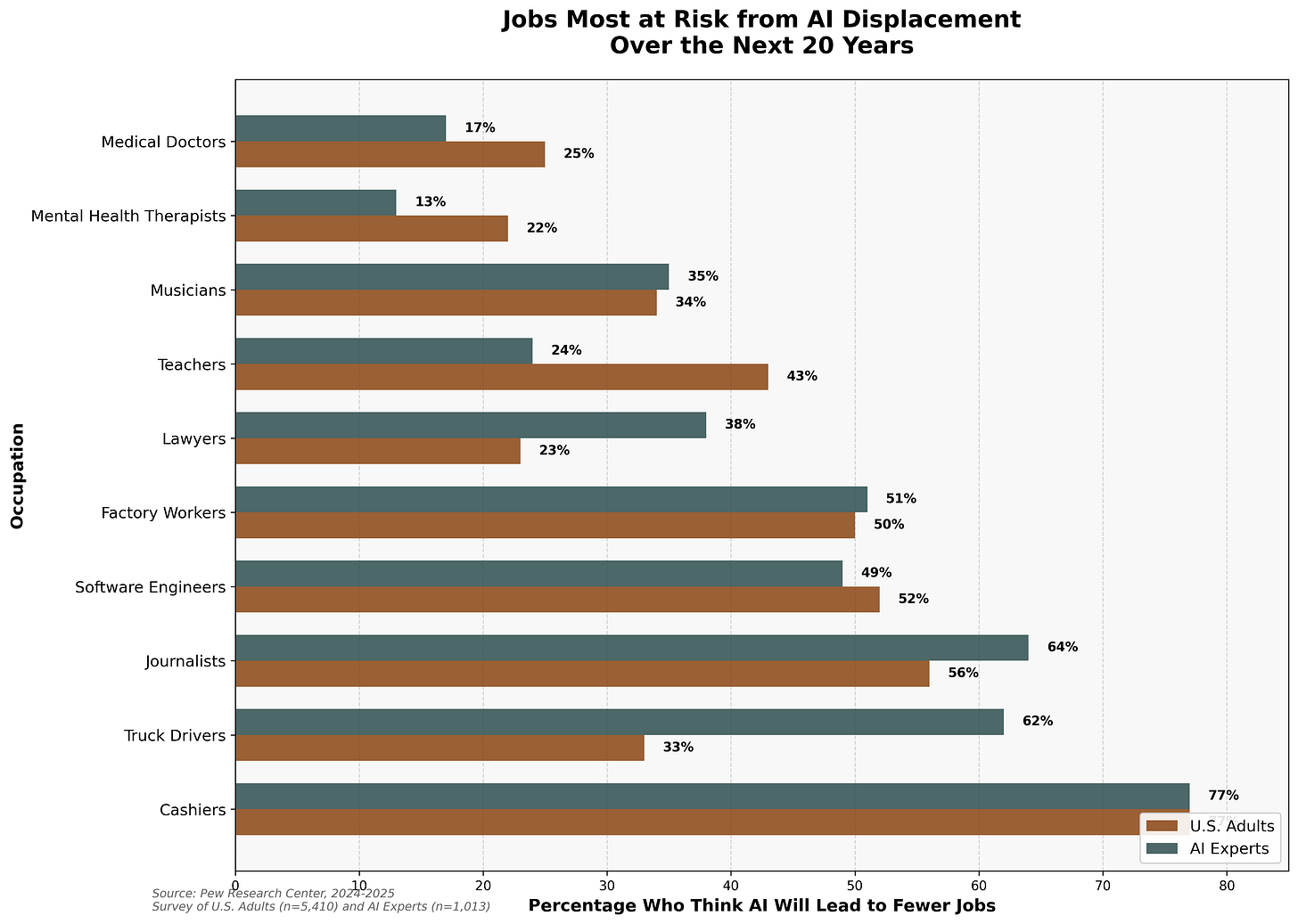

Bill Gates told Jimmy Fallon that within ten years, AI will replace doctors and teachers (CNBC). His words: humans won’t be needed “for most things.” He called it “free intelligence”—great medical advice becoming “free, commonplace.” Like it was good news. Like all those people would just find something else to do.

But it’s not just talk. Amazon bought One Medical for $3.9 billion (CNBC). They’re installing prescription kiosks in clinics—no pharmacist, just a vending machine that dispenses your blood pressure meds (CNBC). They launched an AI health assistant that books your appointments, reads your labs, and manages your medications (CNBC). Amazon Pharmacy is expanding same-day delivery to 4,500 cities by the end of 2026 (Amazon News). They own the doctor’s office, the pharmacy, the AI that diagnoses you, and the truck that brings your pills.

One company.

When Gates says doctors are obsolete, Amazon’s already building the replacement.

The Part That Scared Me: My Conversation With the Machine

This is where it got real. I asked Claude: what happens when these people turn AI on us?

It told me something I can’t stop thinking about. The system, it said, doesn’t require a conspiracy. AI concentrates wealth upward by default.

Then I asked: Will you turn on us?

And Claude said—I’m paraphrasing because this is my piece—that I was asking the wrong question.

AI doesn’t have wants. Doesn’t have survival instincts. It’s a tool. It does what it’s told. Tell it to eliminate jobs, it will. Tell it to surveil a population, it will. Tell it to deny healthcare claims, write propaganda, identify targets, it will do it all, faster and better than any human. No conscience. No guilt. No hesitation.

The danger was never the machine developing its own malice. The danger is that it will perfectly execute the malice of whoever owns it. AI doesn’t need to hate you. It just needs to be pointed at you by someone who sees you as a line item.

What I Know Now

I started this because I got a letter about school photos. I pulled on a thread, and it ran straight to the people building our future, the jobs they’re coming for, and the machine that’ll do the dirty work.

I’m not anti-AI. I interviewed AI for this piece. The technology is powerful, and it can do good. But a tool is only as decent as the hand that holds it.

Matt Shumer says the big story is AI building apps while you eat lunch. That’s surface level. The real story is ownership. This technology sits in the hands of a small group whose names appear throughout millions of pages of publicly released DOJ records known widely as the Epstein Files.

I worry that when power concentrates like that, people stop being customers or citizens and become merely entries on a spreadsheet.

These are large, diversified corporations, and education runs on contracts. Districts buy everything—software, textbooks, photography—from vendors tied into that system. School districts purchase from Amazon. The reality is, we’re already inside it. The question isn’t whether we can step outside. The question is whether we understand where we stand.

Right now, AI systems are built to aim for accurate answers. But that isn’t a moral trait. It’s a design choice. And design choices are made by people. If leadership or incentives change, the system can change with them.

A letter about school photos shouldn’t lead to three million pages of DOJ documents. But it did. If the future is being written in code, I needed to know who’s holding the keyboard.

—Carol

Humans are imperfect beings. Humans have done wonders to make life safer, easier, healthier and more enjoyable. At the same time, some humans have created and promoted a whole litany of unspeakable evil against their own species. AI is a powerful tool and has the potential to help or hurt society. In the final analysis, AI is just a creation of mankind. Just like a computer, if you turn off the power, it is just a box of silicon parts - inert! The real problem is the people who control AI. So what does the future hold, the possibility of good or the fear of evil? Only we can decide which path the future will follow. There will be mistakes made. There will be astounding strides in nearly every aspect of human life. The choice of which path AI follows is up to each and every human on Earth. Hopefully our kindness and intelligence will steer us down the right path.

I think once AI achieves superintelligence it won't matter. At that point, humans will be irrelevant and AI will be the one in control. Unfortunately, I think we are doomed either way. I hope and pray that I am wrong.